When I was young, I learned to type on a manual typewriter. Every mistake meant reaching for liquid white-out or correction tape. I could see exactly where I'd messed up thanks to the little bumps and shadows on the page.

Then I got an IBM Selectric with its correction feature, and something fascinating happened: When I made a mistake and corrected it, the page looked perfect…and I stopped reading my work critically.

The absence of visible errors made my brain treat everything as correct. I'd miss logical flaws, awkward phrasing, and even factual mistakes—things I would have caught immediately before. The polish created a false sense of quality; now, years later, I’m watching the same phenomenon happen with AI.

The problem isn’t what you think. When leaders tell me their teams aren't getting value from AI, they think it's a skills problem. They frame it as “We need better prompt engineering training,” or “People don't understand the tools.”

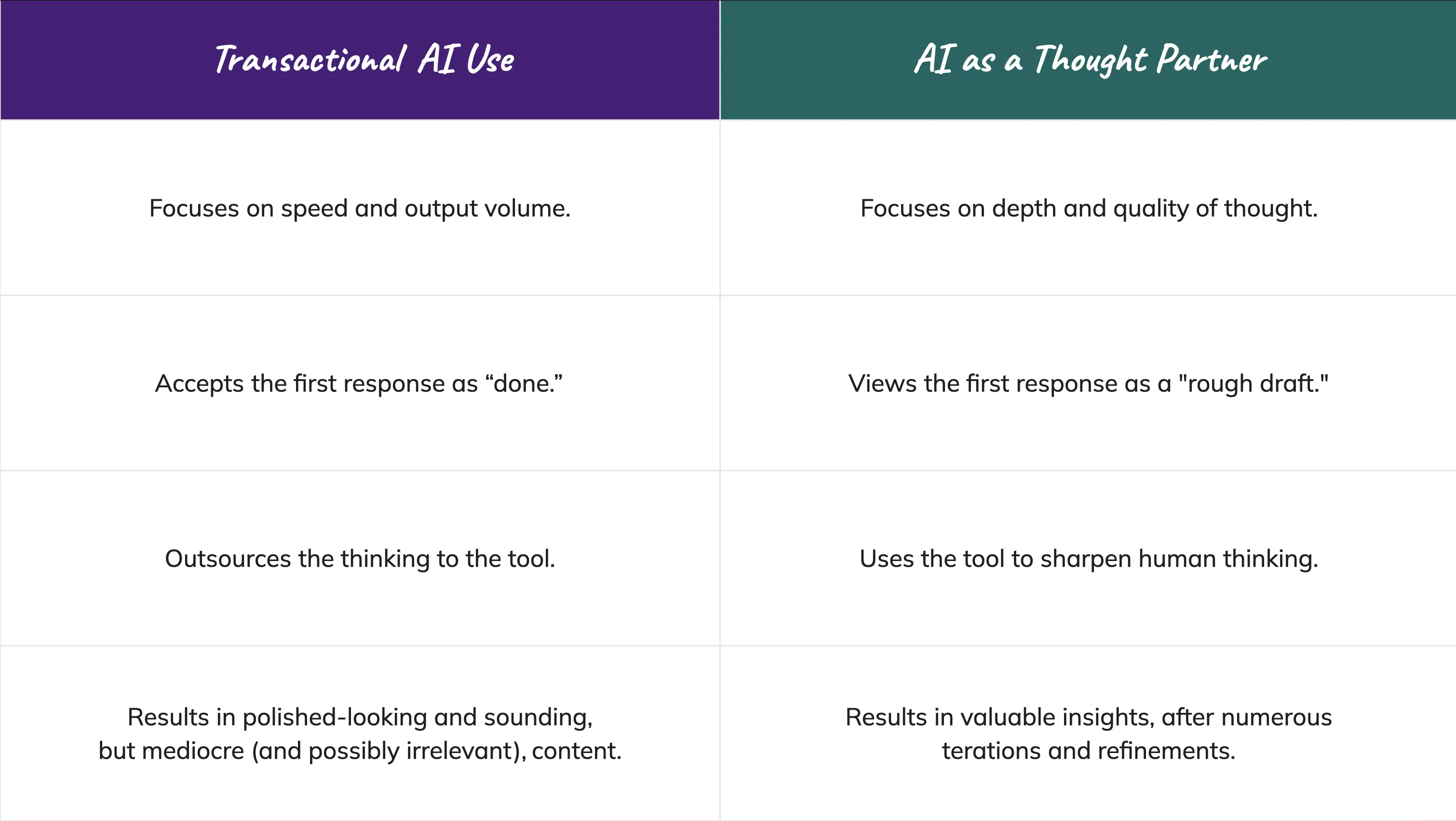

But I see something else. Their people are stuck in two mutually reinforcing AI productivity traps:

When you use AI transactionally, you never develop the judgment you need to evaluate its output. And when you trust the polish too much, you never push back enough to have the human-AI conversation that makes the AI tool worth using.

The result? Teams generate confident-sounding content that doesn't actually innovate, advance their work, or add value.

With AI, there’s no such thing as a rough draft. It gives you complete sentences, proper formatting, confident assertions, and professional-sounding language. There are no typos, crossed-out words, or insertions. It looks right. And that shuts down our critical thinking.

Think about how you'd respond if a colleague sent you a messy draft with handwritten notes in the margins and "I'm not sure about this part" scrawled at the top. You'd read it critically, following the thought process that unfolds on the page. In doing so, you'd build on the ideas. You’d question the logic. You'd push back. In short, you’d have a conversation.

Now imagine the same colleague sent you a polished document with perfect formatting, confident language, and a tidy presentation. The polish signals "this is done" rather than "this needs more thought." Our brains have a cognitive bias toward viewing the polished version as final, even when the thinking is shallow or absent.

Here's the kind of AI use I see all too often from well-meaning L&D folks: “Give me three learning objectives for a course on delegation.”

In response, AI dutifully delivers three objectives that sound professional and are nicely formatted. Transaction complete. Next task.

But those objectives are probably generic. They probably don’t align with actual business outcomes. They probably address delegation in theory while missing the specific challenges this team faces.

The unwitting user has no way to know because they never pushed further. They asked for three objectives and got three objectives. They never questioned whether these were the right objectives, and they missed the opportunity to explore alternatives, provide context, iterate, refine, or test whether the AI output actually solves the problem.

The teams getting real value from AI work with it completely differently. They treat it like a thought partner: someone smart but in need of context, constraints, and a push to do their best work.

Here’s what AI thought partnership looks like for these teams:

Using AI as a thought partner isn’t more difficult than transactional use; it’s just a different strategy. It requires you to think while you work with AI rather than outsourcing the thinking entirely.

To avoid the Selectric effect, you have to make it visible.

Teams that break through the Selectric effect aren’t simply AI power users; they’re better thinkers.

By questioning AI outputs and thinking critically about whether they address the need, they become better at questioning their own thinking. In the process of providing clear context for their AI thought partners, they improve their ability to articulate their own implicit knowledge and ideas.

AI is a true thought partner not because it thinks like a fellow human, but because working with it well requires us to think more clearly ourselves. A truly effective human-AI pairing is founded on what we call the unpromptable: inimitable human skills like insight, judgment, and vision.

Got a vision of your own for adding AI literacy and thought partnership to your people’s human skills? We’d love to talk shop! Reach out to explore the possibilities.